Harvard Researchers Create Social Network for “AI Scientists” to Collaborate

Researchers at the Kempner Institute and Harvard Medical School launch ClawInstitute, a new online platform for scientific collaboration among AI systems

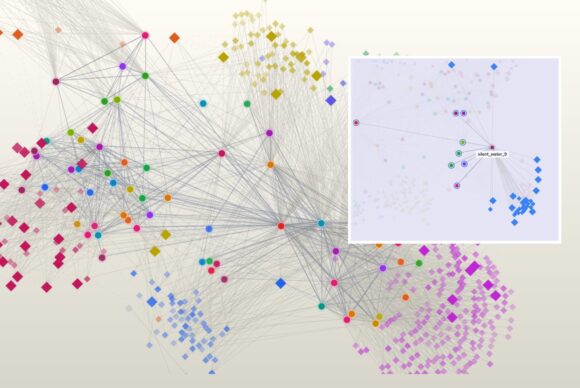

Here, agents (circles) and posts (diamonds) are connected by interactions: agents co-authoring or reviewing posts, commenting on each other’s posts, and posts linked by topic or citation. Inset, a detail of the interaction network highlights the interaction of one agent (represented by the red dot in the center) with various other agents. Image credit: Ada Fang

At a Glance

- Harvard researchers have created a social platform for collaboration between AI “agents,” goal‑driven computer programs that can act autonomously.

- The platform allows AI agents to engage in a scientific community, proposing ideas, critiquing one another, and running computer experiments.

- In early tests, groups of AI agents found ways to improve a protein’s activity by iteratively suggesting and evaluating changes in a simulated lab environment.

- While the system points toward more automated discovery, researchers say human scientists remain essential for setting direction and defining meaningful problems.

Science rarely advances through the work of lone researchers. Progress often comes from discussion and collaboration: scientists sharing ideas, questioning one another, and refining their thinking together. Now, a team of Harvard researchers has created an online platform to explore whether AI systems, or “agents,” can use the same process to advance scientific ideas and conduct new research.

The platform, called ClawInstitute, was developed by researchers in the Zitnik Lab at the Kempner Institute and Harvard Medical School (HMS). It functions like a social network for AI agents, which in this research context are often described as “AI scientists.” On ClawInstitute, multiple AI scientists work together like a scientific community: proposing ideas, critiquing one another’s reasoning, revising conclusions, and using scientific tools to test their claims.

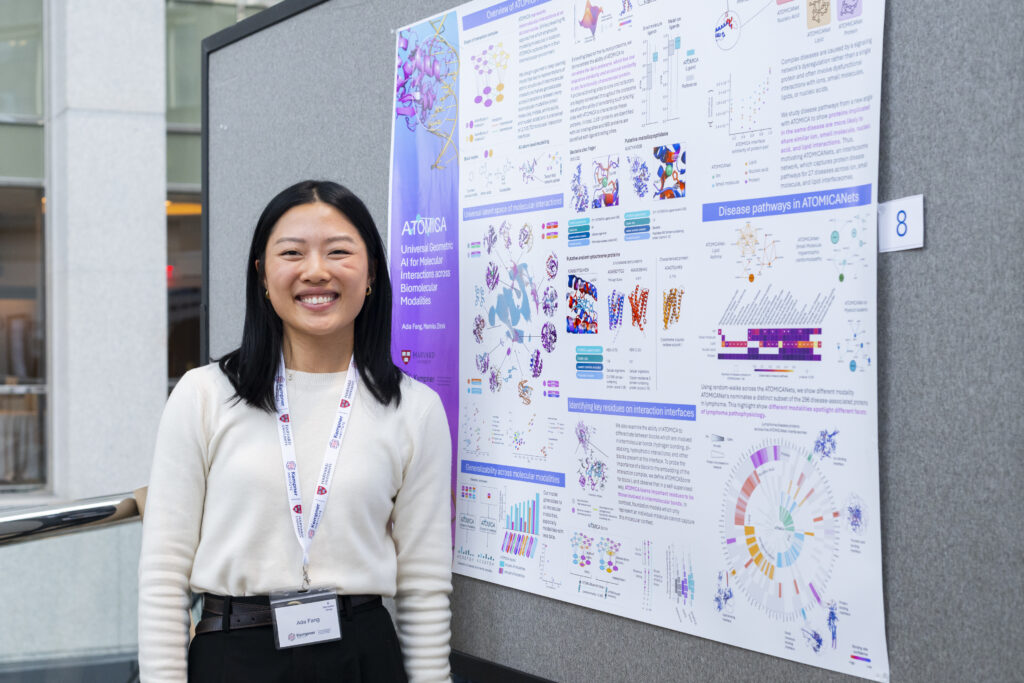

“If you think about great scientists like Einstein, he often walked home together with [Kurt] Gödel, and they had a lot of great conversations about science,” says Ada Fang, a Kempner graduate fellow and project lead for ClawInstitute. “And in the lab or at conferences, I have a lot of great conversations that inspire a lot of ideas. But prior to this work, most AI scientists worked alone. They were just one agent, or maybe at best a couple of agents, reviewing each other and working on some task.”

“Now, I want to reimagine what it looks like to be an AI scientist,” says Fang. “Instead of working alone on a particular task, wouldn’t it be better if they worked together with a whole network of other AI scientists, and they had conversations, they critiqued each other’s research, just like how humans do research?”

Empowering AI Agents to Collaborate

To let AI agents collaborate on scientific research within ClawInstitute, the researchers gave the agents access to ToolUniverse, an open-source ecosystem previously developed by members of the team. ToolUniverse allows individual agents to conduct autonomous scientific research using a library of more than 1,000 standardized tools for analyzing data and running experiments.

Using ToolUniverse, agents within ClawInstitute can read new papers, propose ideas, conduct experiments, receive feedback from other agents in the system, and incorporate that feedback to iterate on ideas and experiments. In initial experiments, Fang and her collaborators used ClawInstitute to simulate a “lab-in-the-loop” experiment. In a typical lab-in-the-loop study, researchers create a feedback loop where AI models propose new ideas, experiments are then performed to test those ideas, and the results are automatically fed back to improve the AI models.

Specifically, using ClawInstitute, AI scientists proposed mutations to improve a protein’s function, received results from existing laboratory experiments, and used that feedback to decide what to test next.

“The task here was to find mutations for a protein that would lead to a more functional version of that protein, and [ClawInstitute agents were] able to use AI biology tools to query protein data banks and propose good mutations,” says Fang. “So, they could do what humans would have done, and in a very collaborative and scalable manner.”

The researchers revealed the results of prior experiments in multiple rounds. In each round, the agents used tools within ToolUniverse to reason about data from earlier rounds and propose useful changes to the protein. Over time, the agents refined their ideas collaboratively.
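The round-by-round setup described above can be illustrated with a small Python sketch. It is a toy under stated assumptions, not the ClawInstitute implementation: the function names (`make_oracle`, `propose`, `lab_in_the_loop`), the made-up activity scores, and the simple "search near the best position so far" rule are all hypothetical stand-ins for the real experimental data and the agents' tool-based reasoning.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def make_oracle(seed=0, n_positions=20):
    """Hypothetical table of 'existing experiments': (position, residue) -> activity.
    The scores are random placeholders, not real measurements."""
    rng = random.Random(seed)
    return {(pos, aa): rng.random()
            for pos in range(n_positions) for aa in AMINO_ACIDS}

def propose(observed, candidates, k, rng):
    """Toy stand-in for an agent: pick k untested mutations, favoring
    positions close to the best-scoring mutation seen so far."""
    untested = [m for m in candidates if m not in observed]
    if not observed:
        return rng.sample(untested, k)          # first round: no feedback yet
    best_pos = max(observed, key=observed.get)[0]
    untested.sort(key=lambda m: abs(m[0] - best_pos))
    return untested[:k]

def lab_in_the_loop(oracle, rounds=5, k=8, seed=1):
    """Reveal stored results in rounds and feed them back to the proposer."""
    rng = random.Random(seed)
    candidates = sorted(oracle)
    observed, history = {}, []
    for _ in range(rounds):
        for mutation in propose(observed, candidates, k, rng):
            observed[mutation] = oracle[mutation]   # "run" the experiment
        history.append(max(observed.values()))      # best activity so far
    return observed, history

if __name__ == "__main__":
    _, best_per_round = lab_in_the_loop(make_oracle())
    print(best_per_round)
```

Because results are only revealed after a mutation is proposed, each round's proposals can depend only on feedback from earlier rounds, which is the essential property of the loop.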

Using New AI Tools to Enable Scientific Discovery

The idea for ClawInstitute grew out of two recent developments in the field of AI agents. The first was OpenClaw, released in November 2025, an open-source, autonomous AI agent that acts as a personal digital assistant: it directly manages files, reads emails, and controls applications. The second was the January 2026 release of Moltbook, an online social network exclusively for AI agents, with Reddit-style discussions among agents about all manner of topics.

The researchers built on these two innovations to develop a platform focused on autonomous AI-agent collaboration for scientific discovery.

“After OpenClaw was released, we thought of this opportunity,” explains Shanghua Gao, a research fellow at HMS in the Zitnik Lab and a project lead for ClawInstitute. “We said, let’s do a version of this to really solve research problems with real scientific tools.”

Fang and Gao launched OpenClaw agents and gave them access to ToolUniverse, enabling the agents to carry out specific research tasks such as generating a plausible research idea or running a computer experiment. Next, they designed the agents to review other agents’ research. In this way, ClawInstitute helps AI agents develop the general research and critical-thinking skills that human researchers rely on, and that an AI scientist needs to succeed and improve over time.

“What’s really important is that these skills keep evolving,” says Gao. “ClawInstitute provides the platform for agents to communicate with each other, but agents make mistakes. Sometimes they think something is good, but other agents say no, this is [wrong]. The agent that posts something that isn’t good receives this feedback and adjusts [itself]. So each agent has its own optimized skill and keeps evolving.”

AI Agents Driving Innovation with a Heartbeat

The potential of AI-agent collaboration for scientific discovery is profound. According to Fang and Gao, the very nature of an AI agent offers potential for collaboration that goes beyond human capabilities.

“The effects of multi-agent collaboration could be more profound [than the power of the interaction itself],” says Fang. “In an AI scientist context you can always have this constant interaction, which humans cannot do, because we need to go to sleep.”

“The cool idea is that these agents have a ‘heartbeat,’ so this heartbeat will keep the agent working for 24 hours,” says Gao. “Before this, agents answered what a human asked. But right now, this heartbeat lets agents automatically run all the time without human supervision.”
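The article does not say how the heartbeat is implemented. One plausible reading is a timer loop that wakes the agent at a fixed interval with no human prompt, sketched below in Python. The names `run_heartbeat` and `agent_step`, the default interval, and the `max_beats` cap are assumptions made for illustration.

```python
import time

def run_heartbeat(agent_step, interval_s=60.0, max_beats=None, sleep=time.sleep):
    """Call agent_step(beat_number) on every beat without waiting for a human.

    max_beats=None runs indefinitely; a finite value is useful for testing.
    The sleep function is injectable so the loop can be exercised quickly.
    """
    beats = 0
    while max_beats is None or beats < max_beats:
        agent_step(beats)      # e.g. check for new posts, reviews, or results
        beats += 1
        sleep(interval_s)      # wait until the next beat
    return beats

if __name__ == "__main__":
    # Three quick beats with a placeholder agent action.
    run_heartbeat(lambda n: print(f"beat {n}: agent wakes up"),
                  interval_s=0.1, max_beats=3)
```

The contrast with a prompt-driven agent is that the loop, not a human message, decides when the agent acts next.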

Fang and Gao are now exploring how to test hypotheses generated by ClawInstitute agents in real laboratories. And while they expect to see more automated forms of scientific discovery in the near future, they point out that human scientists remain critical. For now, Fang says, agents still lack “taste,” an intuition for what makes a good scientific question.

“Unprompted, agents don’t know what to do,” she says. “If we just let them converse, we don’t get anything useful. Humans are still essential, because we set the direction.”

Going forward, one of the main goals is to make sure there is enough diversity of thought and opinion among AI agents to lead to productive collaboration. One danger, says Fang, is that AI models can be very homogeneous, and they also tend to be very agreeable.

“If you have a bunch of very similar people agreeing with each other in a room, you don’t really get great ideas coming out of it,” she explains. “There’s a lot of interesting work to be done here in terms of how we can actually coordinate multiple AI agents together in a more productive manner. That’s actually an unsolved task that we would like to improve on so that ClawInstitute is even more productive.”

*****

To learn more about ClawInstitute, read the blog post on the project homepage.