What Happens When AI Isn’t Trained, But Evolves?

By letting artificial agents evolve in virtual environments, Kempner researchers see intelligent behaviors emerge on their own

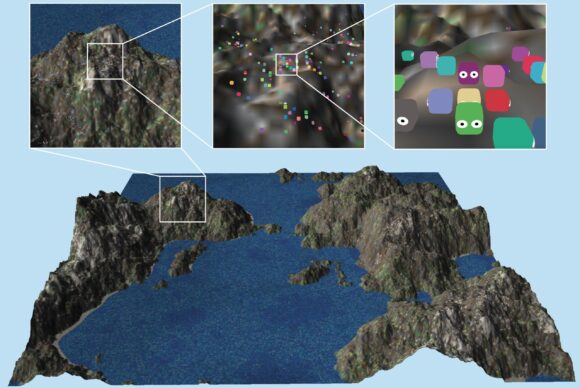

Overview of one of the simulated virtual environments. This 'grid world' contains 1024 × 1024 grid cells and 10,000 individual agents, which are too small to see when fully zoomed out. The inset areas progressively zoom in on one patch of terrain to reveal individual agents and map details.

Image credit: Aaron Walsman

At a glance

- Researchers found that artificial agents can develop intelligent behaviors without being trained on explicit goals or reward signals.

- Rich sensory inputs and large, stable environments were key to the emergence of behaviors like long-distance resource gathering.

- The work suggests that scaling up virtual environments may offer unique insights into the evolution of intelligence.

Over the past two decades, progress in artificial intelligence has followed a reliable recipe: define a clear goal for an AI system and teach it to improve on that objective using ever-larger datasets. Alongside advances in system architecture and computing power, this approach, known as machine learning (ML), has produced systems that can translate languages, write computer code, and recognize images with superhuman accuracy. But it leaves open a deeper question: must artificial intelligence always be taught, or can it emerge on its own from the pressure to survive in an environment?

A team of researchers at the Kempner Institute for the Study of Natural and Artificial Intelligence is exploring that question. A recent study, led by Kempner Research Fellow Aaron Walsman and first authored by Joseph Bejjani, finds that intelligent behaviors can emerge even when there is no specific goal or objective — as long as the AI systems can sense the right information about their virtual environments, and those environments are big enough to allow for stable evolutionary change.

The results offer an early demonstration of the emergence of intelligent behavior in artificial systems through evolution, rather than through teaching the systems with data provided by humans. By investigating how sensory information and environmental scale influence evolution in virtual environments, Walsman and his colleagues are building a new paradigm for AI research that could deepen our understanding of the emergence of natural intelligence. Their approach could eventually provide researchers with a new way to create smart “virtual lab rats” that scientists can probe further in experiments aimed at a basic understanding of intelligent behavior.

AI that evolves through natural selection

At the heart of the project is a deliberate departure from standard machine learning practice. In a typical ML framework, researchers specify what success looks like for a model, for example maximizing reward or minimizing errors. The model (often called an agent in this setting) receives feedback about its performance through a learning signal, which it uses to adjust its internal parameters over time.

In the approach Walsman and his team are pursuing, there is no such stated objective or learning signal, only survival of the fittest. A population of AI agents is allowed to evolve in virtual environments, changing solely through a simulated version of biological natural selection.

“In our project, we just let the agents evolve naturally,” says Walsman. “There’s no learning signal, so the only way an agent changes is through mutation.”

The agents in the study are simple software programs, each controlled by a small artificial neural network that serves as its brain, detecting information from its environment and selecting actions in response. Walsman and his team simulate agent evolution in a variety of environments, each of which is a virtual “grid world” — a chessboard-like grid of squares that may contain resources, obstacles, or other agents. These virtual worlds, constructed with computer code, are like levels in a video game, and the agents are like players.

To survive, each agent in the population must move through the grid to gather food and water, avoid hazards, and reproduce. When an agent reproduces, random mutations are applied to its offspring’s neural networks, subtly altering how the next generation processes information and acts.
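To make the agent-and-mutation loop concrete, here is a minimal sketch in Python. It is not the team's code: the layer sizes, the five movement actions, the tanh hidden layer, and Gaussian mutation with standard deviation `sigma` are all illustrative assumptions. It shows a small network mapping a sensor reading to an action, and reproduction as copying the parent's weights and adding random noise, with no learning signal anywhere.

```python
import math
import random

# Illustrative sizes only (assumptions, not taken from the study).
N_INPUTS = 4   # e.g. readings from the agent's sensors
N_HIDDEN = 8
N_ACTIONS = 5  # e.g. stay, north, south, east, west

def random_layer(rng, n_in, n_out):
    """Weight matrix with one row of n_in weights per output unit."""
    return [[rng.gauss(0.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]

def new_brain(rng):
    """A tiny two-layer network: inputs -> hidden units -> action scores."""
    return [random_layer(rng, N_INPUTS, N_HIDDEN),
            random_layer(rng, N_HIDDEN, N_ACTIONS)]

def choose_action(brain, observation):
    """Map a sensor reading to the index of the highest-scoring action."""
    hidden_w, out_w = brain
    hidden = [math.tanh(sum(w * x for w, x in zip(row, observation)))
              for row in hidden_w]
    scores = [sum(w * h for w, h in zip(row, hidden)) for row in out_w]
    return max(range(N_ACTIONS), key=scores.__getitem__)

def mutate(brain, rng, sigma=0.05):
    """Return a child's brain: the parent's weights plus small Gaussian noise.
    No gradient or reward is used; random noise is the only source of change."""
    return [[[w + rng.gauss(0.0, sigma) for w in row] for row in layer]
            for layer in brain]

rng = random.Random(0)
parent = new_brain(rng)
child = mutate(parent, rng)
print(choose_action(parent, [1.0, 0.0, 0.5, -0.5]),
      choose_action(child, [1.0, 0.0, 0.5, -0.5]))
```

Because `mutate` builds new lists, the parent's brain is left untouched, so a parent can keep living and reproducing alongside its slightly different offspring.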

“Agents that are better at gathering resources reproduce more, so their traits spread through the population,” says Walsman.

The study mirrors biological evolution by natural selection: new characteristics or traits arise through mutation, and the environment “selects” the traits that help with survival.
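Selection in this setting needs no explicit objective; it falls out of who survives and who reproduces. The toy model below is a sketch under simplifying assumptions, not the study's simulation: each agent is reduced to a single hypothetical `skill` value (its chance of finding food on each step), and the energy thresholds, cost of living, mutation size, and population cap are invented for illustration.

```python
import random

def simulate(steps=200, capacity=200, seed=0):
    """Toy selection without a reward signal (all parameters hypothetical).
    Agents that run out of energy die; agents that store enough energy split
    into themselves plus a mutated child; limited space drops extras at random."""
    rng = random.Random(seed)
    agents = [{"skill": rng.random(), "energy": 5} for _ in range(capacity)]
    for _ in range(steps):
        survivors = []
        for agent in agents:
            if rng.random() < agent["skill"]:
                agent["energy"] += 2          # found food this step
            agent["energy"] -= 1              # cost of staying alive
            if agent["energy"] <= 0:
                continue                      # starved: removed from the population
            if agent["energy"] >= 10:         # enough stored energy to reproduce
                agent["energy"] //= 2         # parent and child split the energy
                child_skill = agent["skill"] + rng.gauss(0.0, 0.02)  # mutation
                survivors.append({"skill": min(1.0, max(0.0, child_skill)),
                                  "energy": agent["energy"]})
            survivors.append(agent)
        rng.shuffle(survivors)
        agents = survivors[:capacity]         # excess agents dropped at random
    return agents

def mean_skill(agents):
    """Average foraging skill across the population."""
    return sum(a["skill"] for a in agents) / len(agents)
```

Comparing `mean_skill(simulate(steps=0))` (the random starting population) with `mean_skill(simulate())` should show average skill rising, even though no line of the code rewards skill directly: better foragers simply leave more descendants.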

The impact of better sensors and larger environments

In the study, researchers focused on a basic scientific question: what conditions are necessary for particular behaviors to emerge in a given evolutionary system? To answer this, the team systematically varied two factors: the quality of the agents’ sensory systems and the scale of the environment they evolve in.

Some agents could sense only the square they occupied, while others had richer sensors, such as the ability to see nearby squares or a compass-like sense of direction. The team also ran experiments in grid worlds of different sizes, from small arenas to much larger environments supporting tens of thousands of agents interacting over millions of time steps.
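The difference between sensor types comes down to how much of the world ends up in an agent's input vector. The sketch below illustrates three such sensors; the cell codes, the viewing radius, treating off-map squares as rock, and modeling the compass as a one-hot heading are all hypothetical choices, not details reported for the study.

```python
# Hypothetical cell codes; the article does not say how terrain is encoded.
EMPTY, FOOD, WATER, ROCK = 0, 1, 2, 3
HEADINGS = ("N", "E", "S", "W")

def sense_own_cell(grid, x, y):
    """Poorest sensor: only the contents of the square the agent occupies."""
    return [grid[y][x]]

def sense_neighborhood(grid, x, y, radius=1):
    """Richer sensor: a (2r+1) x (2r+1) window centered on the agent,
    read row by row. Squares beyond the edge of the world read as ROCK."""
    height, width = len(grid), len(grid[0])
    view = []
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            nx, ny = x + dx, y + dy
            inside = 0 <= nx < width and 0 <= ny < height
            view.append(grid[ny][nx] if inside else ROCK)
    return view

def sense_compass(heading):
    """Compass-like sensor: one-hot encoding of the direction the agent faces."""
    return [1 if h == heading else 0 for h in HEADINGS]

grid = [[EMPTY, FOOD, EMPTY],
        [WATER, EMPTY, EMPTY],
        [EMPTY, EMPTY, ROCK]]
observation = sense_neighborhood(grid, 1, 1) + sense_compass("E")
print(observation)
```

An agent with only `sense_own_cell` standing at the center of this grid sees a single `EMPTY` reading, while the neighborhood sensor also reveals food to the north and water to the west, the kind of extra information that can make long-distance foraging worth evolving.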

Certain intelligent behaviors, including the ability to travel long distances for food and water, proved especially sensitive to these two factors, sensor quality and environmental scale. In environments where essential resources were separated (for example, food-rich areas positioned far from water sources), agents with better sensors reliably evolved the more sophisticated strategy of traveling long distances between them. Sensor quality also affected whether the agents evolved the ability to fight one another. Attacking and defeating a rival is one possible strategy for competing over scarce resources: the winner gets all the contested resources on a grid square.
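The article states only that the winner of a fight takes all the contested resources on the square. How the winner is decided is not described, so the rule below, where each side wins with probability proportional to a hypothetical `strength` value, is purely an assumption used to illustrate the winner-take-all payoff.

```python
import random

def resolve_fight(attacker, defender, square, rng):
    """Hypothetical combat rule: the attacker wins with probability
    attacker_strength / (attacker_strength + defender_strength);
    if both strengths are zero, the defender keeps the square.
    The winner collects all food and water on the contested square."""
    total = attacker["strength"] + defender["strength"]
    attacker_wins = rng.random() * total < attacker["strength"]
    winner = attacker if attacker_wins else defender
    winner["food"] += square["food"]
    winner["water"] += square["water"]
    square["food"] = 0
    square["water"] = 0
    return winner

rng = random.Random(0)
a = {"strength": 1.0, "food": 0, "water": 0}
d = {"strength": 0.0, "food": 0, "water": 0}
square = {"food": 3, "water": 2}
winner = resolve_fight(a, d, square, rng)
```

A winner-take-all payoff like this gives fighting a clear benefit when resources are scarce, which is one reason the ability to attack could spread once agents can sense rivals nearby.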

Walsman and his team also discovered that the scale of the environment mattered as much as the quality of the agents’ sensors. In small environments, outcomes were unpredictable: useful behaviors sometimes emerged, but they could just as easily disappear due to early random events or the spread of mutations that proved harmful in the long run. Larger environments were more stable, supporting populations with enough of a buffer to withstand these disruptions.

“When the population is larger, you’re less likely to get wiped out by something pathological early on,” Walsman says. “The system has more time to recover, and for interesting behaviors to establish themselves.”
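Why size buys stability can be illustrated with the textbook Wright-Fisher model of genetic drift from population genetics. This is a swapped-in stand-in, not the study's simulation: a useful trait starts in 20% of the population and gives carriers a 10% reproductive edge (both numbers are arbitrary assumptions), and we count how often random sampling alone wipes it out.

```python
import random

def trait_is_lost(pop_size, start_freq, advantage, rng):
    """Run one haploid Wright-Fisher simulation until the trait is lost or fixed.
    Each generation, every member of the next population copies a parent drawn
    at random, with carriers of the useful trait favored by (1 + advantage)."""
    carriers = round(pop_size * start_freq)
    while 0 < carriers < pop_size:
        weighted = carriers * (1 + advantage)
        p_carrier = weighted / (weighted + (pop_size - carriers))
        carriers = sum(rng.random() < p_carrier for _ in range(pop_size))
    return carriers == 0

def loss_rate(pop_size, trials=100, start_freq=0.2, advantage=0.1, seed=0):
    """Fraction of independent runs in which the useful trait disappears."""
    rng = random.Random(seed)
    lost = sum(trait_is_lost(pop_size, start_freq, advantage, rng)
               for _ in range(trials))
    return lost / trials
```

Comparing `loss_rate(20)` with `loss_rate(500)` should show the beneficial trait vanishing in a substantial fraction of small-population runs and essentially never in the large ones, mirroring the buffer that larger worlds provided.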

What does it take for a virtual “octopus” to evolve?

Walsman emphasizes that the work is not aimed at producing a new way to engineer AI systems in the near term. “What we’re really trying to do is uncover what you need, from the environment and from the agents themselves, for certain behaviors to emerge,” he says.

The project also highlights a different way to think about scale in AI research. While much attention has focused on scaling up model size and training data, the work of Walsman and his colleagues suggests that the size and complexity of environments may be just as important.

Currently, the evolutionary simulations run on individual high-end GPUs, the hardware processors behind the ongoing AI revolution. Each GPU can support populations of tens of thousands of agents with neural network “brains.” The team is now working to expand these virtual worlds by distributing simulations across multiple GPUs on the Kempner AI Cluster, allowing agents to move and interact over even larger spatial and temporal scales.

Walsman is excited by the prospect that as the scale of the evolutionary experiments grows, the AI agents will start to show advanced behaviors, perhaps analogous to those of particularly intelligent animals, like octopuses or birds.

“What does it take to build a [virtual] octopus, or a bird?” Walsman asks. “Is it the scale of the entire world, or can you see something like that emerge in a relatively small but well-designed environment? We don’t know yet.”

Beyond inspiring deeper investigation of these kinds of questions, the study offers an important proof of principle: even in simple virtual environments, evolutionary processes can give rise to intelligent behaviors without rewards or predefined goals. As AI continues to scale, some future advances may come not from tighter supervision but from allowing systems to find their own way in complex environments.