How the Brain and AI Reuse Old Knowledge in New Situations

A study published in the journal Nature Neuroscience shows that the geometry of brain activity patterns determines how well knowledge transfers to new situations. It also presents a single mathematical formula that captures this relationship in both brains and AI systems.

Artwork: Kouzou Sakai for Simons Foundation

At a glance

- The study introduces a new theory that connects the geometry of neural activity to generalization, or how well knowledge transfers to new tasks.

- A single formula shows how four geometric properties of neural representations jointly determine generalization.

- Across artificial neural networks and experimental data from rats and monkeys, the same geometric properties accurately predicted generalization performance, revealing commonalities between artificial and biological intelligence.

- The research shows that the geometric properties that best support generalization change over the course of learning, with lower-dimensional, more compressed neural codes favored early on and higher-dimensional, more disentangled codes becoming advantageous as experience accumulates.

Humans and other animals are remarkably good at using old knowledge in new situations. This ability, known as generalization, allows us to recognize a friend in an unexpected setting, brake at a stop sign in an unfamiliar town, or apply a math rule to a new problem. Although neuroscientists have identified brain activity patterns linked to this skill, they have lacked a unifying mathematical theory for understanding and predicting generalization performance.

Kempner Institute Investigator SueYeon Chung, who is also an assistant professor of physics and of applied mathematics at Harvard, aims to fill that gap. In a study recently published in Nature Neuroscience, she and her collaborators introduce a mathematical theory that reveals how the geometric structure of neural activity patterns governs generalization in both brains and artificial intelligence systems. The first author is Albert J. Wakhloo, a Ph.D. student in neuroscience at Columbia University, and the second author is William Slatton, a Ph.D. student in neuroscience at Harvard.

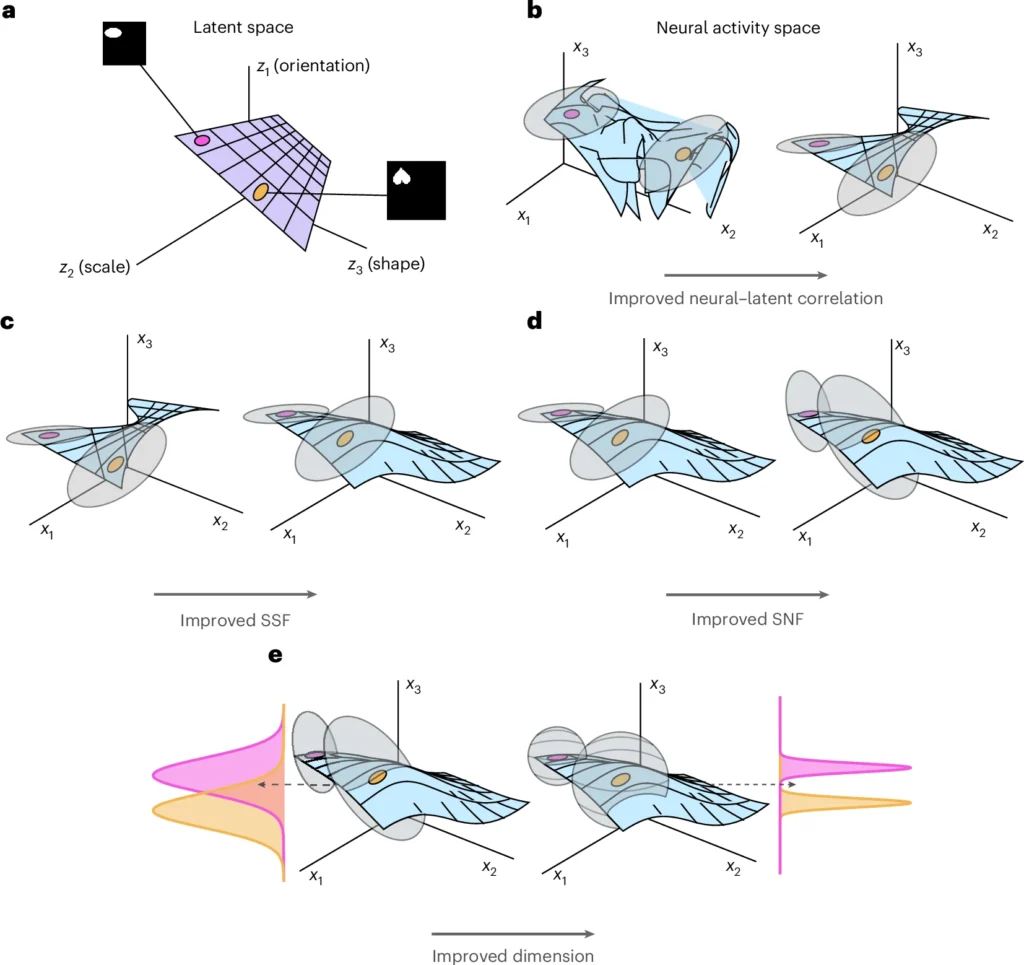

At the heart of their new theory are neural representations: patterns of internal activity in a brain or an AI model that encode information. These patterns form a kind of geometric landscape, with shape, spread, and orientation in a high-dimensional space. The team found that four measurable properties of this geometry jointly determine how well knowledge transfers across tasks, and they derived a single formula that captures this relationship. The study is the first to bring these four properties together in a single mathematical theory of generalization.

“By looking at the geometry of neural activity, we can now say something precise about how well a brain or an AI system will generalize,” Chung says. “It gives neuroscientists and AI researchers a concrete set of quantities to measure and optimize.”

Chung and her collaborators tested their theory in artificial neural networks (the software “brains” behind many modern AI systems) as well as in neural activity data from rats and monkeys. In both biological and artificial systems, the theory successfully predicted performance on tasks that required generalizing prior knowledge.

How the Geometry of Neural Codes Changes During Learning

The study also revealed a key insight about learning: the geometric properties of optimal neural codes change over time. Early in learning, when examples are scarce, the best strategy is to compress the neural representation, focusing on the most informative features while suppressing less relevant ones. As experience accumulates, the optimal code expands, devoting neural resources to representing an increasingly complete, and less compressed, picture of the environment.

“When you’re in an early stage [of learning], it’s better to have simple representations, so you can quickly learn the important features of the world,” says Chung. But as experience accumulates, more detailed representations become advantageous. “So the properties that minimize [generalization] error depend on where you are in the learning process.”

Strikingly, the team found evidence for exactly these predicted trends both in artificial neural networks and in recordings from rat brains during learning.

With these results, Chung and her team provide a new mathematical lens for interpreting large-scale neural data and for understanding how learning shapes internal representations in both biological and artificial systems.

Four Geometric Properties of Accurate Generalization

The mathematical framework that Chung and her team developed gave them a way to estimate whether a neural representation would be conducive to generalization. Using this framework, they derived a formula that predicts generalization performance from four previously studied geometric properties of neural representations.

The first property, “neural-latent correlation,” measures how faithfully neural activity tracks meaningful information in the environment. The second, “neural dimension,” captures how many independent directions the neural activity pattern spans: whether it forms a low-dimensional representation, akin to a simple sketch, or a high-dimensional one, analogous to a richly detailed map. The third, “signal-signal factorization,” measures disentanglement: whether different features of the world, such as an object’s shape and its size, are represented along independent, non-overlapping neural directions. The fourth, “signal-noise factorization,” measures how effectively the neural representations separate meaningful signals from random noise or irrelevant variability. Although earlier studies had associated each of these properties with generalization, no single framework unified them.
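To make “neural dimension” a little more concrete: one widely used estimate of how many directions neural activity spans is the participation ratio of the covariance eigenvalues. The sketch below assumes that measure; it is an illustration only, not the study’s own definition or its generalization formula, and the function name `participation_ratio` and the synthetic “sketch-like” and “map-like” data are hypothetical.

```python
import numpy as np


def participation_ratio(activity: np.ndarray) -> float:
    """Participation ratio of a (samples x neurons) activity matrix.

    Assumed dimensionality measure (not necessarily the study's definition):
    PR = (sum_i lambda_i)^2 / sum_i lambda_i^2, where lambda_i are the
    eigenvalues of the neuron-by-neuron covariance matrix. PR is 1 when all
    variance lies along one direction and equals the number of neurons when
    variance is spread evenly across all directions.
    """
    centered = activity - activity.mean(axis=0, keepdims=True)
    cov = centered.T @ centered / (centered.shape[0] - 1)
    eigvals = np.linalg.eigvalsh(cov)      # covariance is symmetric
    eigvals = np.clip(eigvals, 0.0, None)  # drop tiny negative round-off
    return float(eigvals.sum() ** 2 / (eigvals ** 2).sum())


rng = np.random.default_rng(0)
n_samples, n_neurons = 2000, 50

# Hypothetical "sketch-like" code: 50 neurons driven by only 2 latent variables.
latents = rng.standard_normal((n_samples, 2))
mixing = rng.standard_normal((2, n_neurons))
low_dim = latents @ mixing

# Hypothetical "map-like" code: independent activity in every neuron.
high_dim = rng.standard_normal((n_samples, n_neurons))

print(f"low-dimensional code:  PR = {participation_ratio(low_dim):.1f}")
print(f"high-dimensional code: PR = {participation_ratio(high_dim):.1f}")
```

The low-dimensional code cannot exceed a participation ratio of 2, because all of its variance lies in a two-dimensional subspace, while the independent code lands close to the full count of 50 neurons. The other three properties would need their own measures, which the study defines and which this sketch does not attempt to reproduce.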

One surprising finding from the geometric analysis is that these four properties can trade off with one another. For example, increasing the dimensionality of a neural representation can come at the cost of reducing its correlation with the environment. This means that two very different neural codes can produce the same overall generalization performance, but for different geometric reasons. The theory gives researchers a way to look under the hood and understand why a neural system generalizes well, not just whether it does.

The researchers tested their mathematical framework in two types of artificial neural networks and in neural activity data from rats and monkeys. In each case, the theory allowed the team to predict generalization performance.

Shared Principles in Brains and Machines

The study arrives as new technologies increasingly allow neuroscientists to record from thousands of neurons simultaneously, producing vast datasets. Because the theory provides concrete, measurable geometric quantities, it gives experimentalists specific features to look for when analyzing these large-scale recordings, contributing to a more complete understanding of how the brain organizes information in order to generalize.

The findings highlight parallels between biological brains and AI systems. Despite their different structures, both appear to rely on similar geometric principles when they generalize accurately. These parallels could inform efforts to design AI systems that transfer knowledge more consistently and reliably, which remains a challenge even for the most advanced AI tools.

Chung describes this work as the beginning of a growing research program. “I’m very excited to continue developing these types of theories and engage with many experimental labs to help understand their data, and also to interpret AI systems and their internal representations.”